2024

Mood Monitoring with Speech Emotion Recognition

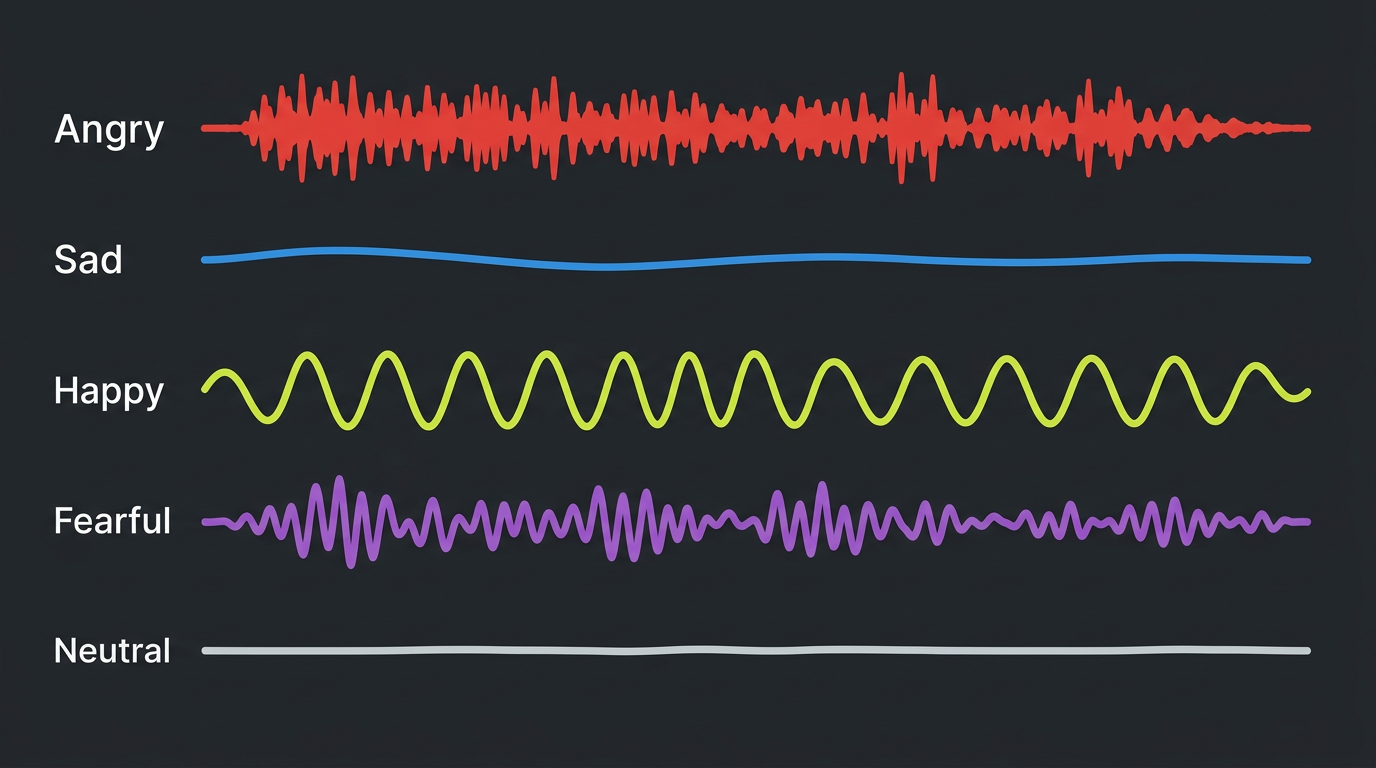

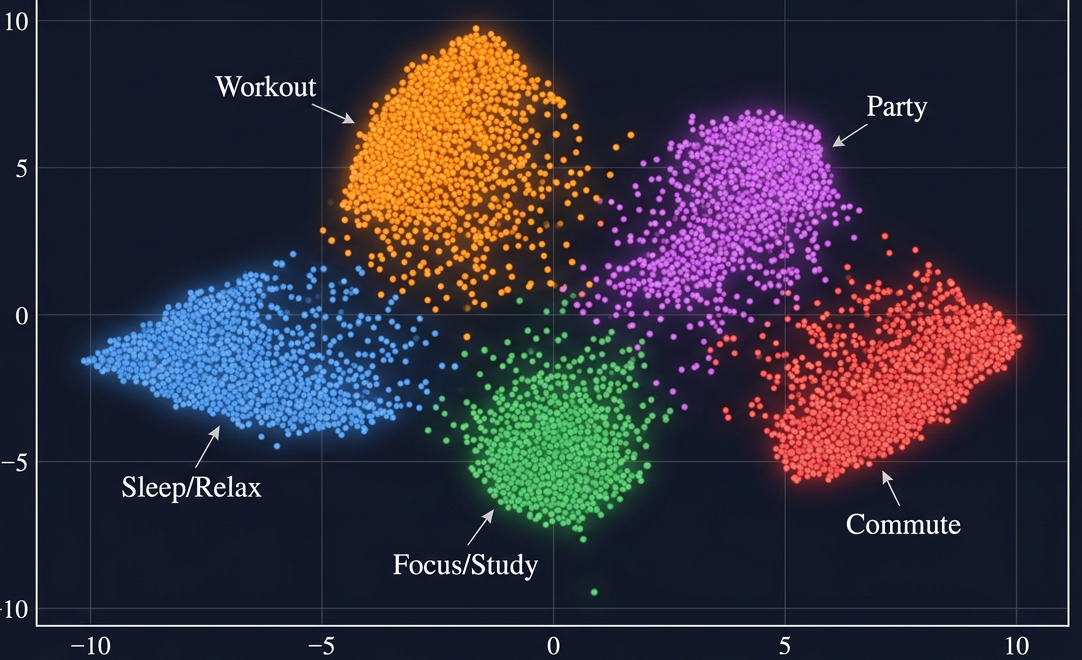

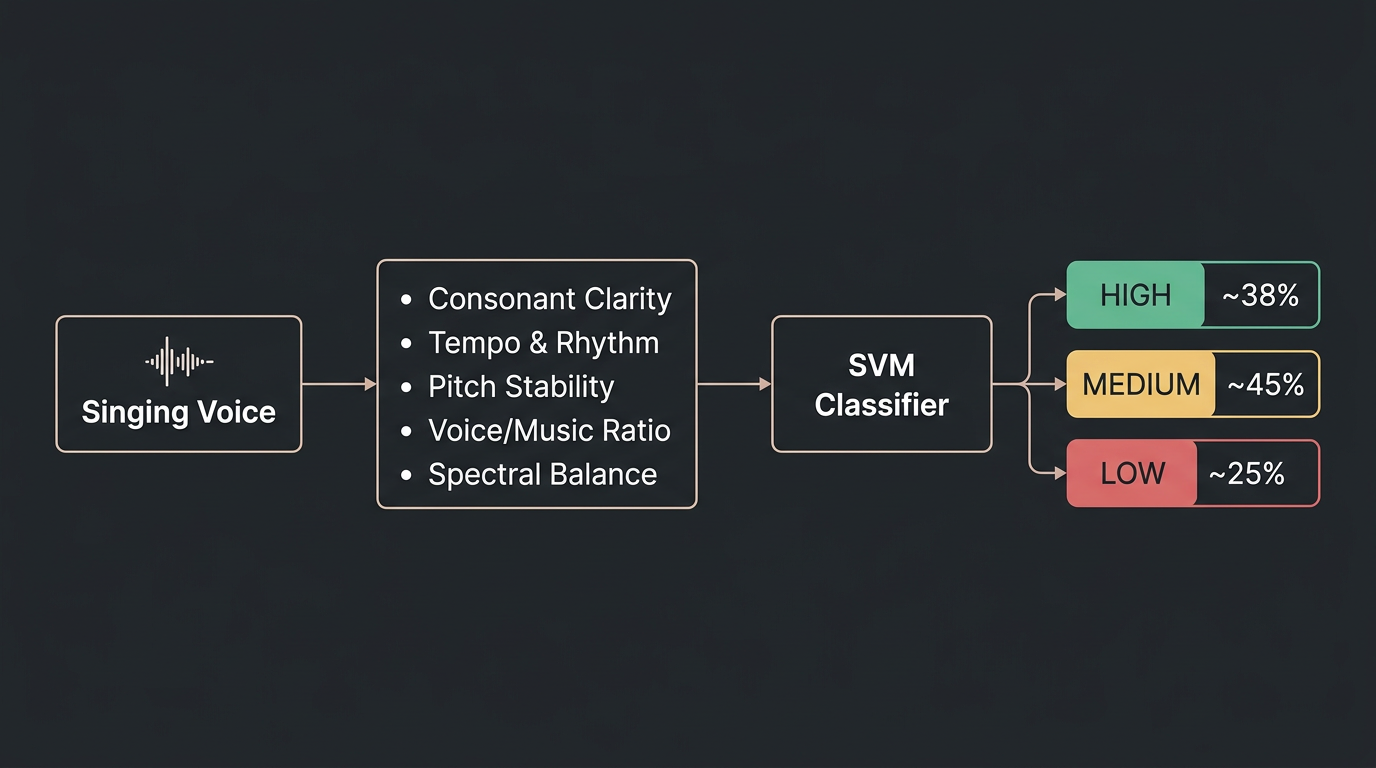

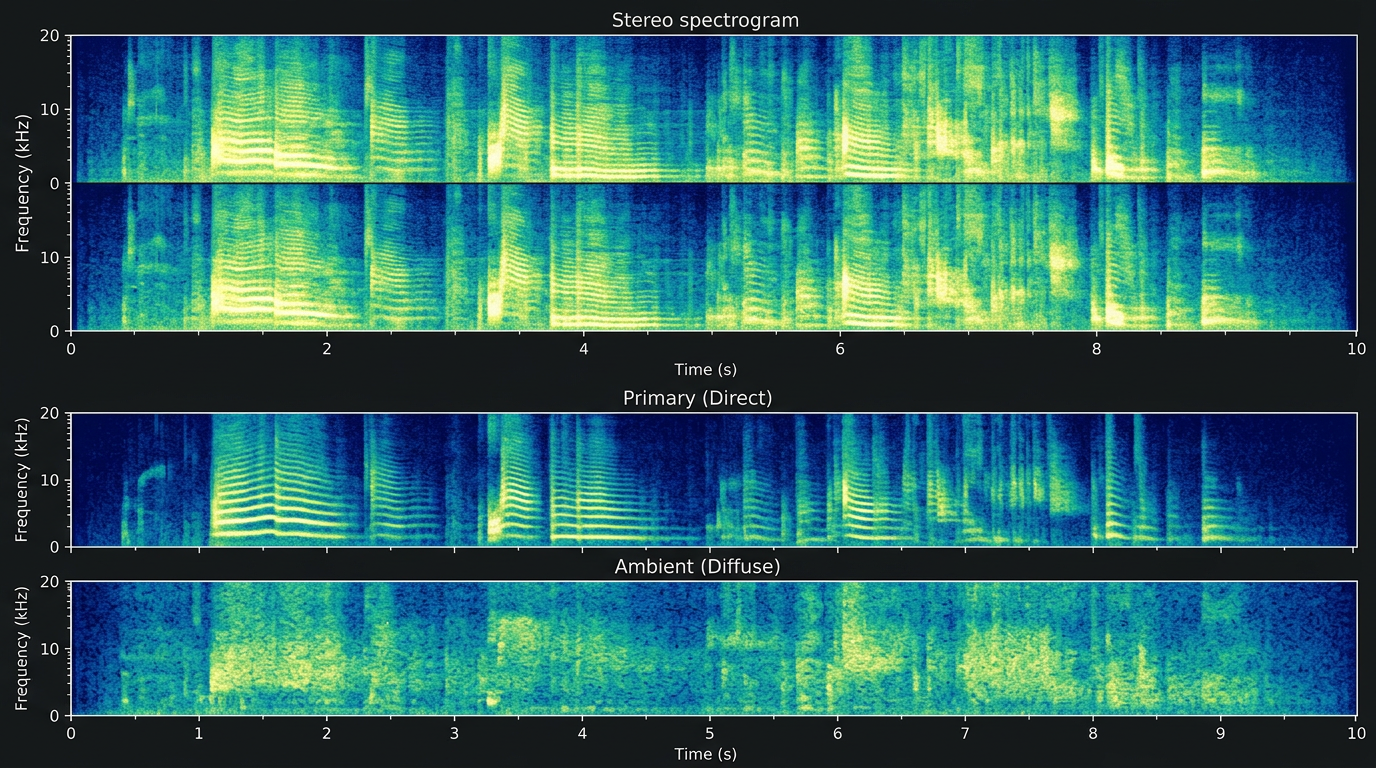

At Emobot, I led research on automatic speech emotion recognition. Augmenting the training data with synthetic speech generated by emotion conversion significantly improved recognition accuracy, with direct impact on a real-time healthcare application.